A Major Performance Upgrade That Narrows the Gap with Windows

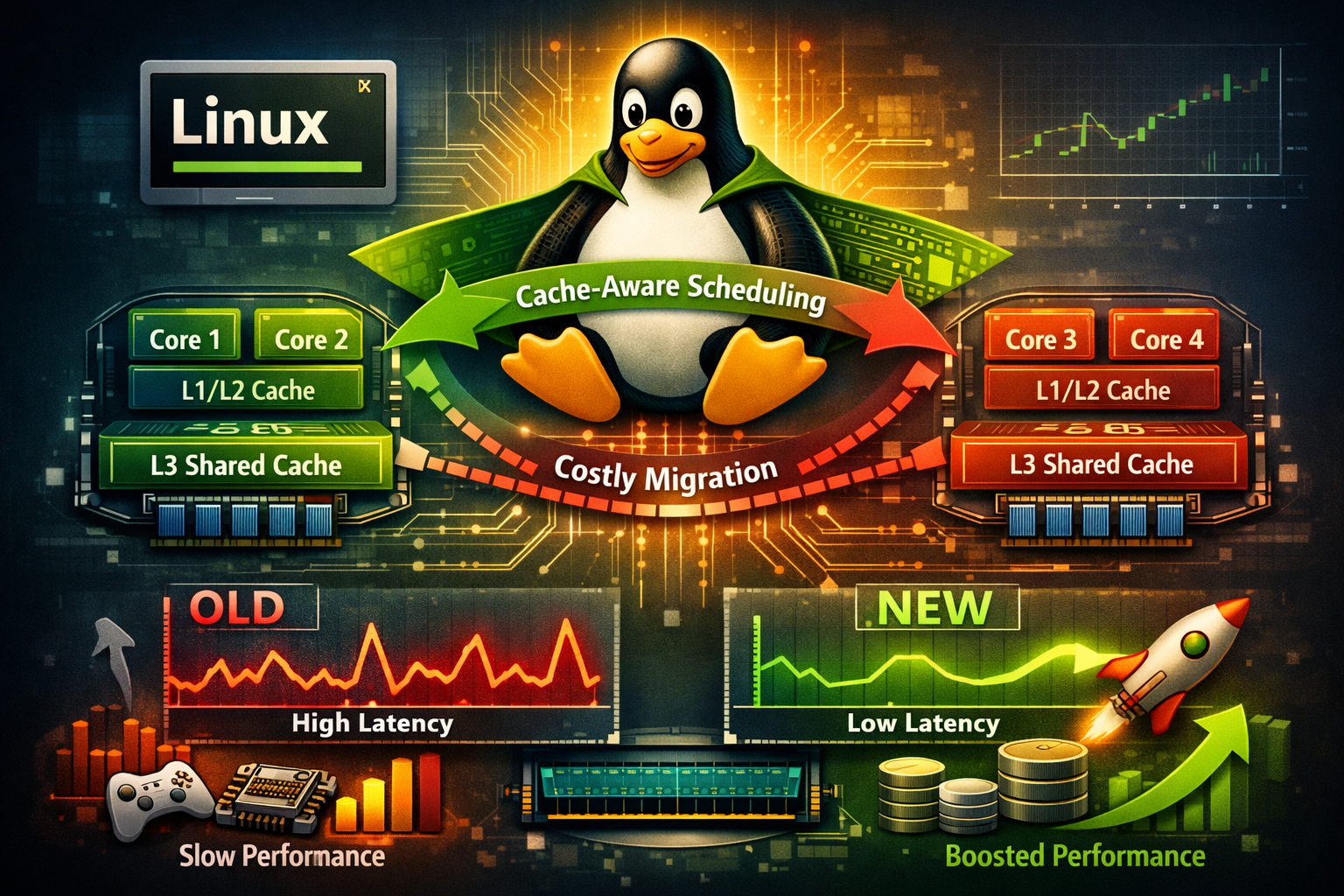

The Linux kernel has long been admired for its robustness and adaptability. For years, developers and system engineers have praised its scheduler for distributing workloads effectively across CPUs. One important optimization was missing, however: cache awareness.

A new feature called Cache-Aware Scheduling now changes that. It helps Linux better understand how modern CPUs arrange their memory caches, which can noticeably improve performance on multi-core machines.

Given the growing complexity of current CPUs, this enhancement could bring considerable speedups for developers, gamers, and enterprise workloads alike.

What Is Cache-Aware Scheduling?

At the core of every operating system lies a component known as the CPU scheduler. The scheduler decides which task or thread runs on which CPU core and for how long.

Modern processors are built with multiple layers of cache memory designed to speed up data access:

- L1 Cache: Very small but extremely fast, located inside each core.

- L2 Cache: Slightly larger but still private to the core.

- L3 Cache (Last Level Cache or LLC): Shared between groups of CPU cores.
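On a Linux machine, this layout can be inspected from user space. The sketch below assumes the standard sysfs files `level` and `shared_cpu_list` under `/sys/devices/system/cpu/cpuN/cache/indexM/`. The helper names are illustrative, not part of any kernel or library API. It groups CPUs by the highest-level cache they share.

```python
import glob
import os


def parse_cpu_list(text):
    """Expand a kernel CPU list such as "0-3,8-11" into a sorted list of ints."""
    cpus = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            lo, hi = part.split("-")
            cpus.update(range(int(lo), int(hi) + 1))
        else:
            cpus.add(int(part))
    return sorted(cpus)


def group_by_shared_cache(shared_lists):
    """Collapse per-CPU shared_cpu_list strings into unique cache groups."""
    groups = {tuple(parse_cpu_list(s)) for s in shared_lists}
    return sorted(list(g) for g in groups)


def read_llc_shared_lists(sysfs_root="/sys/devices/system/cpu"):
    """Read the shared_cpu_list of each CPU's highest-level cache.

    Returns an empty list when the sysfs files are not present.
    """
    results = []
    for cpu_dir in glob.glob(os.path.join(sysfs_root, "cpu[0-9]*")):
        best_level, best_list = -1, None
        for index_dir in glob.glob(os.path.join(cpu_dir, "cache", "index*")):
            try:
                with open(os.path.join(index_dir, "level")) as f:
                    level = int(f.read())
                with open(os.path.join(index_dir, "shared_cpu_list")) as f:
                    shared = f.read()
            except (OSError, ValueError):
                continue
            if level > best_level:
                best_level, best_list = level, shared
        if best_list is not None:
            results.append(best_list)
    return results
```

Calling `group_by_shared_cache(read_llc_shared_lists())` returns one CPU list per last-level cache. A chip with a single shared L3 yields one group, while multi-cluster designs yield several.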

When a thread moves from one core to another that does not share the same cache, the data it was working on is typically not present in the new core's caches. The processor must then fetch that data from a more distant cache or from main system memory, which is significantly slower.

Each such failed lookup is known as a cache miss, and frequent cache misses can reduce overall system performance.
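The cost of misses can be made concrete with the textbook average-access-time formula: hit time plus miss rate times miss penalty. The sketch below is only an illustration. The latencies and miss rates are hypothetical numbers chosen to show the arithmetic, not measurements of any real CPU.

```python
def average_access_time_ns(hit_time_ns, miss_rate, miss_penalty_ns):
    """Average memory access time: hit time plus the expected miss cost."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return hit_time_ns + miss_rate * miss_penalty_ns


# Hypothetical values: a thread running with a warm cache versus the same
# thread shortly after migrating to a core with a cold cache.
warm = average_access_time_ns(hit_time_ns=10.0, miss_rate=0.05, miss_penalty_ns=100.0)
cold = average_access_time_ns(hit_time_ns=10.0, miss_rate=0.50, miss_penalty_ns=100.0)
print(f"warm cache: {warm:.1f} ns, cold cache: {cold:.1f} ns")
```

Even with these made-up numbers, the pattern holds: the average cost of a memory access grows in proportion to the miss rate, which is why avoidable migrations are worth preventing.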

Cache-Aware Scheduling addresses this issue by keeping related threads close to the same shared cache. Instead of freely moving tasks between cores, the scheduler now respects the processor’s cache layout.

The result is better data locality, fewer cache misses, and faster execution.
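The idea can be sketched as a toy placement policy. This is not the kernel's algorithm. It is a minimal model with hypothetical names (`pick_cpu`, `imbalance_limit`) that prefers a CPU in the task's previous cache domain and leaves it only when that domain is clearly more loaded than the rest of the system.

```python
def pick_cpu(prev_cpu, llc_groups, load, imbalance_limit=2):
    """Choose a CPU for a waking task, preferring its previous cache domain.

    prev_cpu: CPU the task last ran on.
    llc_groups: list of CPU lists, one per shared last-level cache.
    load: dict mapping CPU -> number of runnable tasks.
    imbalance_limit: extra tasks tolerated in order to stay cache-local.
    """
    # The "home" domain is the set of CPUs sharing a cache with prev_cpu.
    home = next(g for g in llc_groups if prev_cpu in g)
    local_best = min(home, key=lambda c: (load[c], c))
    global_best = min(load, key=lambda c: (load[c], c))
    # Stay near the warm cache unless the home domain is clearly overloaded.
    if load[local_best] - load[global_best] <= imbalance_limit:
        return local_best
    return global_best


groups = [[0, 1], [2, 3]]
print(pick_cpu(1, groups, {0: 1, 1: 2, 2: 0, 3: 0}))  # stays in the home domain
print(pick_cpu(1, groups, {0: 5, 1: 6, 2: 0, 3: 0}))  # moves to the idle domain
```

The threshold captures the trade-off any such policy has to make: locality is worth a small load imbalance, but not an idle cache domain next to an overloaded one.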

Why Linux Needed This Upgrade

While Linux has historically had one of the most advanced schedulers of any operating system, Windows introduced stronger cache and topology awareness earlier, particularly with the release of Windows 10.

Microsoft optimized Windows to understand modern CPU designs that include:

- Hybrid architectures with performance cores (P-cores) and efficiency cores (E-cores)

- Complex NUMA (Non-Uniform Memory Access) layouts

- Multi-chip modules with separate cache clusters

These optimizations allowed Windows to better manage workloads on complex CPUs.

With Cache-Aware Scheduling now integrated into the Linux kernel, Linux narrows that performance gap. The new feature works alongside existing mechanisms such as:

- NUMA balancing

- Energy-aware scheduling

- Load balancing across cores

Rather than replacing existing scheduling logic, the feature enhances task placement by aligning it with the physical structure of the CPU.

Real-World Performance Improvements

Early benchmarks show promising results. Tests conducted on Intel Sapphire Rapids processors have demonstrated performance improvements ranging from 30% to 45% in certain workloads.

The biggest gains appear in applications that rely heavily on cache efficiency, including:

- Large-scale data analytics

- High-thread compilation workloads

- Microservices with small working data sets

- High-performance computing applications

These workloads depend on rapid memory access, making them especially sensitive to cache behavior.

Benefits for Gaming and Modern CPUs

Gamers may also notice improvements from cache-aware scheduling. Many modern games rely on multiple threads sharing frequently accessed data such as textures, physics states, or AI computations.

By keeping related threads close to shared cache resources, Linux can deliver more consistent frame times and reduced latency.

The feature also benefits newer processor designs, including:

AMD 3D V-Cache CPUs

Processors with 3D stacked cache rely heavily on maximizing cache locality. Cache-aware scheduling helps ensure threads stay close to these large cache pools.
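Until the scheduler handles this automatically, a similar effect can be approximated from user space on chips where only some core groups carry the larger cache. The sketch below assumes cache sizes in the sysfs style (for example `"98304K"`) and uses a made-up two-domain layout. The helper names are hypothetical.

```python
def parse_cache_size(text):
    """Convert a sysfs-style size string such as "98304K" into bytes."""
    text = text.strip().upper()
    units = {"K": 1024, "M": 1024 ** 2, "G": 1024 ** 3}
    if text and text[-1] in units:
        return int(text[:-1]) * units[text[-1]]
    return int(text)


def cpus_with_largest_cache(domains):
    """Return the CPU list of the domain with the biggest last-level cache.

    domains: list of (cpu_list, size_string) pairs, one per cache domain.
    """
    cpus, _ = max(domains, key=lambda d: parse_cache_size(d[1]))
    return list(cpus)


# Hypothetical layout; real values would be read from sysfs.
example = [([0, 1, 2, 3], "32768K"), ([4, 5, 6, 7], "98304K")]
print(cpus_with_largest_cache(example))
```

On Linux, the returned list could be passed to `os.sched_setaffinity(0, cpus)` to restrict the current process to those cores. Manual pinning of this kind is exactly the tuning that cache-aware scheduling aims to make unnecessary.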

Hybrid Intel CPUs

Intel’s modern chips combine high-performance cores and efficiency cores, which often have different cache arrangements. Smarter scheduling helps workloads run on the most appropriate cores without losing cache efficiency.

Portable Gaming Devices

Handheld gaming systems running Linux-based operating systems, such as SteamOS, could see smoother gameplay and improved battery efficiency thanks to reduced memory access overhead.

The Future of Linux Performance

The addition of Cache-Aware Scheduling marks an important milestone for the Linux kernel. As CPU architectures become increasingly complex, operating systems must evolve to understand hardware topology in greater detail.

By aligning thread scheduling with cache structures, Linux improves performance without sacrificing its traditional flexibility across servers, desktops, cloud environments, and embedded systems.

For developers, gamers, and enterprise users, this upgrade reinforces a simple principle:

When tasks stay close to their data, systems stay close to peak performance.